LongVT: Incentivizing "Thinking with Long Videos" via Native Tool Calling

- TL;DR

- The Problem: Why Long Videos Are Hard

- Our Insight: Let Models "Think with Tools"

- Overview

- Why "Native" Tool Calling Matters

- Motivation of VideoSIAH

- The Data Gap

- VideoSIAH: Segment-in-a-Haystack

- Data Pipeline

- Dataset Statistics

- Quantitative Comparisons

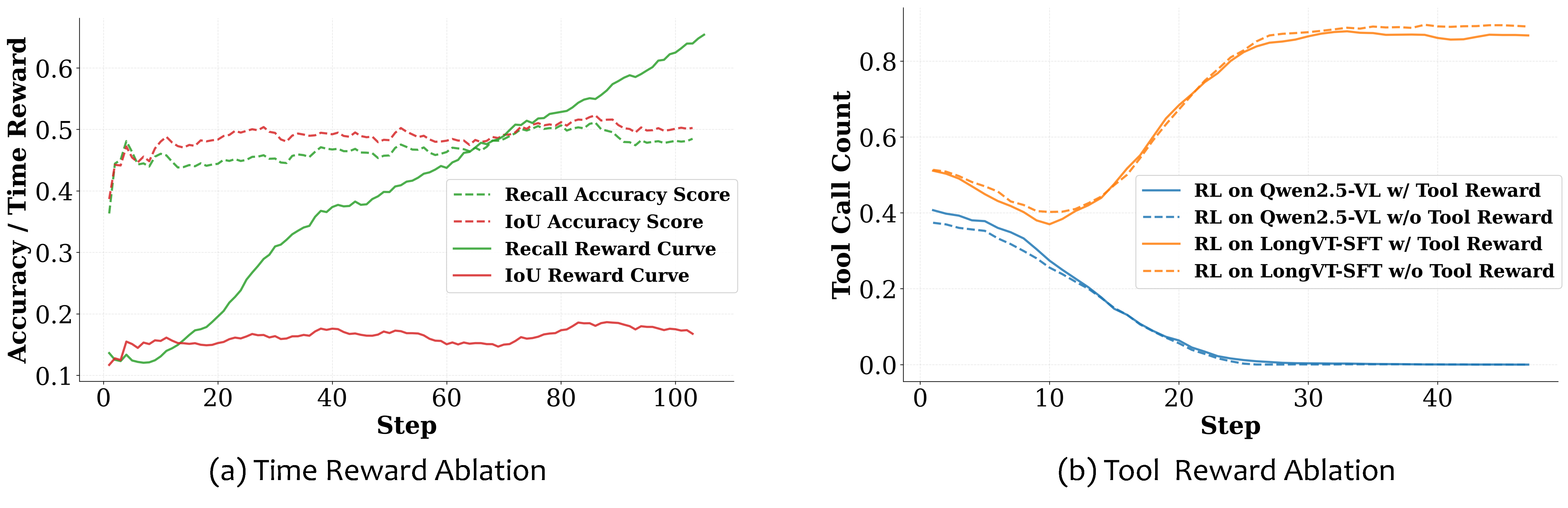

- Ablation Studies

- Data Recipe

- Training Stage

- Training Dynamics

- Key Takeaways & Lessons Learned

- 1. Cold-Start SFT is Crucial for Tool Learning

- 2. The Model Learns *When* to Use Tools

- 3. IoU > Recall for Temporal Grounding Rewards

- 4. Existing Benchmarks Have Contamination Issues

- What's Next?

TL;DR

We teach vision-language models to think like humans when watching long videos: instead of trying to memorize everything at once, the model learns to skim first, then zoom in on relevant moments. This is achieved through a native tool-calling mechanism where the model can invoke crop_video(start_time, end_time) to inspect specific clips on demand. The result? A 7B model that posts the best average score among the open-source models we compare against and beats GPT-4o on VideoSIAH-Eval (42.0 vs. 17.4).

The Problem: Why Long Videos Are Hard

Imagine watching a 2-hour movie and being asked: "What was the character wearing when they first entered the coffee shop?" Even for humans, this requires a specific strategy: you don't re-watch the entire movie—you mentally locate the relevant scene, then focus on those few seconds.

Current vision-language models don't work this way. They typically:

- Sample frames uniformly across the video (missing critical moments)

- Process everything at once (overwhelming the context window)

- Hallucinate when the answer isn't in the sampled frames

The core issue is that evidence in long videos is sparse and temporally localized. A 2-hour video at 1 FPS gives you 7,200 frames, but the answer to your question might depend on just 3-5 frames buried somewhere in the middle.
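To make the sparsity concrete, here is a minimal sketch of the arithmetic. The 64-frame budget and the 5-second event window are hypothetical values chosen for illustration, not settings from our experiments.

```python
def uniform_timestamps(duration_s: float, num_frames: int) -> list[float]:
    """Midpoints of num_frames equal-length bins spanning the video."""
    step = duration_s / num_frames
    return [step * (i + 0.5) for i in range(num_frames)]


def frames_hitting(timestamps: list[float], start_s: float, end_s: float) -> int:
    """Count sampled timestamps that fall inside the evidence window."""
    return sum(start_s <= t <= end_s for t in timestamps)


duration_s = 2 * 60 * 60            # 2-hour video in seconds
total_frames_at_1fps = duration_s   # one frame per second
budget = 64                         # hypothetical frame budget
samples = uniform_timestamps(duration_s, budget)
gap_s = duration_s / budget         # spacing between sampled frames
hits = frames_hitting(samples, 3000.0, 3005.0)  # hypothetical 5-second event

print(total_frames_at_1fps, gap_s, hits)  # 7200 112.5 0
```

With sampled frames almost two minutes apart, a 5-second event can fall entirely between two samples, which is exactly the failure mode described above.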

Our Insight: Let Models "Think with Tools"

The key insight behind LongVT is simple: instead of forcing models to process everything, let them actively seek evidence.

We introduce a native tool-calling paradigm where the model can:

- Preview the video globally (sparse sampling)

- Hypothesize which time window might contain the answer

- Zoom in by calling crop_video(start_time, end_time)

- Verify or refine based on what it actually sees

This mimics the human cognitive process of global-to-local reasoning. The model isn't just passively processing frames—it's actively investigating.
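The two viewing modes in the steps above can be sketched as frame-timestamp planning. This is an illustrative sketch only: the `Clip` type, `global_preview`, the `duration_s` and `fps` parameters, and the clamping behavior are assumptions for the example, not the actual tool implementation. Only the crop_video(start_time, end_time) name comes from the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Clip:
    start_time: float
    end_time: float
    timestamps: tuple[float, ...]  # seconds at which frames would be decoded


def global_preview(duration_s: float, num_frames: int) -> Clip:
    """Sparse pass over the whole video with a fixed frame budget."""
    step = duration_s / num_frames
    stamps = tuple(step * (i + 0.5) for i in range(num_frames))
    return Clip(0.0, duration_s, stamps)


def crop_video(start_time: float, end_time: float,
               duration_s: float, fps: float = 2.0) -> Clip:
    """Hypothetical tool body: clamp the window, then plan dense timestamps."""
    start = max(0.0, min(start_time, duration_s))
    end = max(start, min(end_time, duration_s))
    num = int((end - start) * fps) + 1
    stamps = tuple(start + i / fps for i in range(num))
    return Clip(start, end, stamps)


preview = global_preview(duration_s=7200.0, num_frames=8)
zoomed = crop_video(3000.0, 3005.0, duration_s=7200.0)
print(len(preview.timestamps), len(zoomed.timestamps))  # 8 11
```

The preview spends 8 frames on two hours, while the zoomed clip spends 11 frames on five seconds: the same budget order of magnitude, concentrated where the hypothesis points.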

Overview

Our contributions are threefold:

(1) LongVT: An End-to-End Agentic Framework for "Thinking with Long Videos"

We introduce a novel paradigm that natively interleaves multimodal tool-augmented Chain-of-Thought (CoT) with on-demand clip inspection over hours-long videos, thereby enabling large multimodal models (LMMs) to perform more effective and reliable long-video reasoning.

(2) VideoSIAH: A Fine-Grained Data Suite for Evidence-Sparse Long-Video Reasoning

We construct a scalable data pipeline that produces diverse and high-quality question-answering (QA) data and tool-integrated reasoning traces, and a dedicated benchmark under a video segment-in-a-haystack setting.

(3) LongVT-7B-RFT: A State-of-the-Art Baseline with Invaluable Insights

Through extensive quantitative comparisons, systematic ablations on data recipes, training strategies, and design choices, as well as in-depth analyses of training dynamics, we establish and open-source a powerful baseline model with "thinking with long videos" capabilities.

Interleaved Multimodal Chain-of-Tool-Thought (iMCoTT). Compared to prior text-based CoT reasoning, iMCoTT in LongVT natively performs self-reflection by calling the crop_video(start_time, end_time) tool. It proposes a time window after a global preview, proactively fetches the corresponding short clip, rethinks based on the new evidence, and decides whether to refine the window or answer directly. Such tool-augmented reasoning grounds each step in what is actually seen, rather than blindly rephrasing earlier text as text-only CoT does, which mitigates hallucination and improves temporal localization and answer correctness.

Why "Native" Tool Calling Matters

You might ask: why not just use an external retrieval system? The key difference is agency. In LongVT:

- The model decides when to call the tool (not every query needs it)

- The model chooses the time window based on its reasoning

- The model integrates the retrieved evidence into its thought process

This is fundamentally different from retrieval-augmented generation (RAG), where retrieval happens before reasoning. In LongVT, retrieval is part of reasoning.
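A minimal sketch of what "retrieval is part of reasoning" means in control-flow terms. Everything here is a stand-in: the `<tool_call>` JSON wrapper, `run_episode`, `scripted_policy`, and `fake_crop` are hypothetical names for illustration, and the scripted policy replaces the real model. The point is only that the model's own output decides whether and where the tool runs.

```python
import json
import re
from typing import Callable

# Assumed wire format for a tool call; the real format may differ.
TOOL_CALL = re.compile(r"<tool_call>(.*?)</tool_call>", re.DOTALL)


def run_episode(policy: Callable[[list[str]], str],
                crop_video: Callable[[float, float], str],
                question: str, max_turns: int = 4) -> tuple[str, int]:
    """Alternate model turns and tool results until the model answers."""
    context = [f"question: {question}", "observation: <global preview frames>"]
    calls, turn = 0, ""
    for _ in range(max_turns):
        turn = policy(context)
        context.append(turn)
        match = TOOL_CALL.search(turn)
        if match is None:  # the model chose to answer without zooming in
            break
        args = json.loads(match.group(1))
        calls += 1
        # The retrieved clip goes back into the same reasoning context.
        context.append("observation: " + crop_video(args["start_time"], args["end_time"]))
    return turn, calls


def scripted_policy(context: list[str]) -> str:
    """Stand-in for the LMM: zoom in once, then answer from the new evidence."""
    if not any(line.startswith("observation: clip") for line in context):
        return ("The preview suggests the scene is near minute 50. "
                '<tool_call>{"start_time": 3000, "end_time": 3005}</tool_call>')
    return "answer: a red coat"


def fake_crop(start_time: float, end_time: float) -> str:
    return f"clip {start_time}-{end_time}s: <dense frames>"


answer, num_calls = run_episode(scripted_policy, fake_crop, "What was the character wearing?")
print(answer, num_calls)  # answer: a red coat 1
```

In a RAG pipeline the retrieval step would sit outside this loop and run once before the first model turn. Here a policy that answers immediately makes zero tool calls, and one that is unsure can keep refining until `max_turns`.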

Motivation of VideoSIAH

The Data Gap

Having a good method is only half the battle—you need the right data to train it. We found that existing long-video datasets have two major problems:

- Coarse-grained annotations: Most datasets have clip-level QAs that don't require precise temporal reasoning. A model can often guess the answer without knowing when something happened.

- Benchmark contamination: Many multiple-choice benchmarks can be "solved" without even watching the video! Our contamination study (see table below) shows that some models perform well above chance in the "No Visual" setting.

VideoSIAH: Segment-in-a-Haystack

To address this, we created VideoSIAH—a dataset specifically designed for the "needle in a haystack" scenario where the answer depends on a small segment within a long video.

The name captures the challenge: finding the right video Segment In A Haystack of hours-long content.

Key design principles:

- Open-ended questions: No multiple choice to game

- Human validation: Annotators verify that questions truly require temporal localization

- Tool-integrated traces: Training data includes the reasoning process, not just answers

We conduct a rigorous contamination study on the Qwen-VL series across two probing settings: (1) No Visual, where we feed the text prompt without video frames to test for direct memorization; (2) Rearranged Choices, where we randomize the mapping between option labels and their textual content for multiple-choice questions to detect label memorization. Our experimental results reveal significant vulnerabilities in existing benchmarks and highlight the necessity of our proposed VideoSIAH-Eval.

| Setting | VideoMME (w/o sub) | VideoMMMU adapt. | VideoMMMU comp. | VideoMMMU perc. | VideoSIAH-Eval |
|---|---|---|---|---|---|
| Qwen2.5-VL-7B-Instruct | | | | | |
| Original | 64.3 | 35.7 | 44.3 | 56.7 | 33.8 |
| No Visual | 40.1 | 25.7 | 38.3 | 39.3 | 12.7 |
| Rearranged Choices | 56.0 | 29.7 | 40.3 | 67.0 | - |
| Qwen3-VL-8B-Instruct | | | | | |
| Original | 69.3 | 40.7 | 60.3 | 71.3 | 46.6 |
| No Visual | 44.1 | 33.7 | 39.3 | 46.7 | 0.00 |
| Rearranged Choices | 69.0 | 36.3 | 47.7 | 69.3 | - |

Contamination Tests for Qwen-VL Series on Long Video Understanding and Reasoning Benchmarks. The VideoSIAH-Eval column shows "-" entries for Rearranged Choices since our proposed benchmark is fully open-ended QA, where random option-answer mapping is not applicable.
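The two probing settings can be sketched as simple prompt transformations. This is an illustrative sketch, not our evaluation code: `rearrange_choices` and `no_visual_prompt` are hypothetical helpers, the sample question is made up, and the shuffle assumes every option text is unique.

```python
import random


def rearrange_choices(options: dict[str, str], answer_label: str,
                      rng: random.Random) -> tuple[dict[str, str], str]:
    """Shuffle which text sits behind each label; return the new correct label."""
    labels = sorted(options)
    texts = [options[label] for label in labels]
    rng.shuffle(texts)
    shuffled = dict(zip(labels, texts))
    correct_text = options[answer_label]
    # Assumes option texts are unique, so exactly one label matches.
    new_label = next(lab for lab, text in shuffled.items() if text == correct_text)
    return shuffled, new_label


def no_visual_prompt(question: str, options: dict[str, str]) -> str:
    """Text-only prompt: question and options, with no video frames attached."""
    lines = [question] + [f"{label}. {text}" for label, text in sorted(options.items())]
    return "\n".join(lines)


options = {"A": "a kitchen", "B": "a coffee shop", "C": "a train", "D": "a park"}
shuffled, new_answer = rearrange_choices(options, "B", random.Random(0))
print(no_visual_prompt("Where does the scene take place?", shuffled))
print("correct label after shuffling:", new_answer)
```

A model that has memorized "the answer to this question is B" loses accuracy under the shuffle, while a model that reads the option text does not. A model that scores well above chance on the text-only prompt is drawing on something other than the video.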

Data Pipeline

Data Pipeline of VideoSIAH. We construct a semi-automatic data pipeline that integrates several state-of-the-art LMMs to sequentially perform long video segmentation, video clip captioning, segment-in-a-haystack QA generation, cross-modal QA filtering, and iMCoTT generation. Icons with human silhouettes denote human-in-the-loop validation, where annotators inspect a small set of representative failures to refine prompting rules for QA generation, QA filtering, and iMCoTT generation. Note that iMCoTT traces are generated only for the cold-start supervised fine-tuning (SFT) stage, whereas reinforcement learning (RL) operates solely on the filtered QA pairs.

Dataset Statistics

| Split | Source | Purpose | Samples | Total |
|---|---|---|---|---|
| SFT (w/o tool) | LongVideo-Reason CoT | Reasoning-augmented Open-ended QA | 5,238 | 228,835 |
| | Video-R1 CoT | Reasoning-augmented Video QA | 165,575 | |
| | Image-based CoT | Reasoning-augmented Image QA | 58,022 | |
| SFT (w/ tool) | Gemini-distilled iMCoTT | Tool-augmented Open-ended QA | 12,766 | 19,161 |
| | Qwen-distilled iMCoTT | Tool-augmented Temporal Grounding | 6,395 | |
| RL | Gemini-distilled QAs | Open-ended QA over Long Videos | 1,667 | 17,020 |
| RFT | Self-distilled iMCoTT | Agentic Behaviors | 15,353 | |

Dataset Statistics of VideoSIAH. Our proposed dataset contains large-scale non-tool SFT data, tool-augmented SFT data, RL QAs, and self-distilled reinforcement fine-tuning (RFT) traces.

Category Distribution of VideoSIAH-Eval. We present the distribution of video types (left) and question types (right), highlighting the diversity of our proposed benchmark.

Quantitative Comparisons

We compare our LongVT models against proprietary LMMs and state-of-the-art open-source video reasoning models across various long video understanding and reasoning benchmarks.

| Model | Reasoning Prompt | Tool Calling | VideoMME (w/ sub) | VideoMMMU adapt. | VideoMMMU comp. | VideoMMMU perc. | LVBench | VideoSIAH-Eval | Avg |
|---|---|---|---|---|---|---|---|---|---|
| Proprietary LMMs | | | | | | | | | |
| GPT-4o | ✗ | ✗ | 77.2 | 66.0 | 62.0 | 55.7 | 30.8 | 17.4 | 51.5 |
| Gemini 1.5 Pro | ✗ | ✗ | 81.3 | 59.0 | 53.3 | 49.3 | 33.1 | - | 55.2 |
| Open-Source (Sparse Sampling) | | | | | | | | | |
| Qwen2.5-VL-7B | ✗ | ✗ | 62.6 | 37.3 | 28.0 | 36.7 | 30.7 | 28.1 | 37.2 |
| Video-R1-7B | ✓ | ✗ | 61.0 | 36.3 | 40.7 | 52.3 | 37.2 | 27.9 | 42.6 |
| VideoRFT-7B | ✓ | ✗ | 60.9 | 36.7 | 42.0 | 53.0 | 34.7 | 26.5 | 42.3 |
| Video-Thinker-7B | ✓ | ✗ | 61.0 | 34.3 | 44.7 | 53.0 | 52.2 | 10.4 | 42.6 |
| LongVT-7B-SFT (Ours) | ✓ | ✓ | 12.5 | 37.7 | 46.0 | 58.3 | 36.0 | 26.8 | 36.2 |
| LongVT-7B-RL (Ours) | ✓ | ✓ | 66.1 | 32.7 | 44.7 | 50.0 | 37.8 | 31.0 | 43.7 |
| Open-Source (Dense Sampling) | | | | | | | | | |
| Qwen2.5-VL-7B | ✗ | ✗ | 64.3 | 35.7 | 44.3 | 56.7 | 40.9 | 33.8 | 46.0 |
| Video-R1-7B | ✓ | ✗ | 60.5 | 37.3 | 38.7 | 46.3 | 40.1 | 33.1 | 42.7 |
| VideoRFT-7B | ✓ | ✗ | 49.2 | 37.7 | 40.7 | 48.7 | 18.7 | 26.9 | 37.0 |
| Video-Thinker-7B | ✓ | ✗ | 60.8 | 37.7 | 42.7 | 55.3 | 54.3 | 6.6 | 42.9 |
| LongVT-7B-SFT (Ours) | ✓ | ✓ | 64.9 | 32.3 | 42.0 | 49.7 | 41.1 | 34.8 | 44.1 |
| LongVT-7B-RL (Ours) | ✓ | ✓ | 66.1 | 37.7 | 42.3 | 56.3 | 41.4 | 35.9 | 46.6 |
| LongVT-7B-RFT (Ours) | ✓ | ✓ | 67.0 | 35.7 | 43.7 | 56.7 | 41.3 | 42.0 | 47.7 |

Performance Comparison with Existing Video-Centric LMMs across Various Long Video Understanding and Reasoning Benchmarks. The best and second-best results among open-source models in each column are marked in bold and underlined, respectively.

Ablation Studies

We conduct comprehensive ablation studies to examine the impact of data recipes, training stages, and reward design on model performance.

Data Recipe

| Setting | VideoMME (w/ sub) | VideoMMMU adapt. | VideoMMMU comp. | VideoMMMU perc. | LVBench | VideoSIAH-Eval | Avg |
|---|---|---|---|---|---|---|---|
| SFT w/o self-curated iMCoTT | 8.4 | 33.6 | 41.6 | 46.0 | 15.1 | 4.1 | 24.8 |
| SFT w/ self-curated iMCoTT | 64.9 | 32.3 | 42.0 | 49.7 | 41.1 | 34.8 | 44.1 |
| RL w/o self-curated QAs | 55.1 | 30.6 | 42.0 | 45.6 | 38.4 | 30.8 | 40.4 |
| RL w/ self-curated QAs | 66.1 | 37.7 | 42.3 | 56.3 | 41.4 | 35.9 | 46.6 |

Training Stage

| Setting | VideoMME (w/ sub) | VideoMMMU adapt. | VideoMMMU comp. | VideoMMMU perc. | LVBench | VideoSIAH-Eval | Avg |
|---|---|---|---|---|---|---|---|
| SFT only | 64.9 | 32.3 | 42.0 | 49.7 | 41.1 | 34.8 | 44.1 |
| RL only | 52.7 | 35.3 | 43.0 | 55.1 | 37.1 | 28.2 | 41.9 |
| SFT+RL | 66.1 | 37.7 | 42.3 | 56.3 | 41.4 | 35.9 | 46.6 |
| SFT+RL+RFT | 67.0 | 35.7 | 43.7 | 56.7 | 41.3 | 42.0 | 47.7 |

Training Dynamics

(a) shows training dynamics under different accuracy and time rewards, and (b) shows the effect of tool-call reward on tool usage.

Recall encourages coverage; IoU demands precision. Using Recall as the reward function during RL has a drawback: the policy can enlarge the predicted span until it envelops the ground-truth interval, which raises the Recall-based score monotonically while ignoring boundary quality. The plateau in the Recall Accuracy Score curve supports this reward-hacking hypothesis. In contrast, IoU explicitly penalizes span inflation via the union term, yielding better-aligned boundaries and more disciplined tool use.
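The difference is easy to see numerically. The sketch below uses the standard definitions of temporal Recall and IoU on (start, end) spans in seconds; the spans are made-up examples, and the full reward used in training may include other terms not shown here.

```python
Span = tuple[float, float]  # (start_time, end_time) in seconds


def overlap(pred: Span, gt: Span) -> float:
    """Length of the intersection between two spans (0 if disjoint)."""
    return max(0.0, min(pred[1], gt[1]) - max(pred[0], gt[0]))


def recall(pred: Span, gt: Span) -> float:
    """Fraction of the ground-truth span covered by the prediction."""
    return overlap(pred, gt) / (gt[1] - gt[0])


def iou(pred: Span, gt: Span) -> float:
    """Intersection over union; the union term grows with an inflated span."""
    inter = overlap(pred, gt)
    union = (pred[1] - pred[0]) + (gt[1] - gt[0]) - inter
    return inter / union if union > 0 else 0.0


gt = (100.0, 110.0)
tight = (98.0, 112.0)       # slightly loose but well-aligned prediction
inflated = (0.0, 1000.0)    # "cover everything" prediction

for name, pred in [("tight", tight), ("inflated", inflated)]:
    print(f"{name}: recall={recall(pred, gt):.2f} iou={iou(pred, gt):.2f}")
# tight: recall=1.00 iou=0.71
# inflated: recall=1.00 iou=0.01
```

Recall cannot tell the two predictions apart, so a policy optimized for it has no reason to tighten its window. IoU drops from 0.71 to 0.01 as the span inflates.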

Is tool reward really necessary? The Qwen2.5-VL-7B baseline collapses to near-zero tool calls after training in both configurations (w/ and w/o tool reward), indicating that the model does not internalize the tool's function. After performing cold-start SFT to obtain LongVT-7B-SFT, tool-call frequency rises during training under both configurations and accuracy improves in tandem. Hence, the tool reward is not required for basic competence: once SFT grounds the tool's semantics, the model learns when and how to invoke the tool.

Key Takeaways & Lessons Learned

Through this project, we gained several insights that might be valuable for future work:

1. Cold-Start SFT is Crucial for Tool Learning

One surprising finding was that you can't just RL your way to tool use. Without the cold-start SFT phase that demonstrates tool semantics, the model never learns to call tools—even with explicit tool rewards. This suggests that tool-using behaviors need to be shown before they can be optimized.

2. The Model Learns When to Use Tools

After SFT, we observed that the model doesn't blindly call tools for every query. It develops an intuition for which questions require zooming in (fine-grained visual details) versus which can be answered from the global preview (general scene understanding). This emergent selectivity wasn't explicitly trained—it arose naturally from the data.

3. IoU > Recall for Temporal Grounding Rewards

When we first tried Recall-based rewards, we saw the model "gaming" the metric by predicting increasingly wide time windows. Switching to IoU forced the model to be precise about boundaries. This is a concrete example of how reward design shapes agent behavior.

4. Existing Benchmarks Have Contamination Issues

Our contamination study revealed that some models perform surprisingly well on long-video benchmarks even without seeing the video. This motivated us to create VideoSIAH-Eval with open-ended questions that can't be gamed through memorization.

What's Next?

LongVT opens up several exciting directions:

- Multi-round tool calling: Currently, the model typically calls the tool once. Enabling iterative refinement could help with even more complex queries.

- Tool diversity: Beyond crop_video, we could add tools for object tracking, scene search, or even external knowledge retrieval.

- Longer videos: While we tested on hour-long videos, the paradigm should scale to even longer content like full TV series or lecture archives.

We're excited to see how the community builds on this work!

Open-Source Resources

We open-source LongVT to facilitate future development of long-video reasoning with tool calling in the community.